Andrea Silverman

Multimodal AI Evaluation Systems

Frameworks for measuring identity consistency, motion realism, prompt fidelity, and temporal coherence in generative image and video models

Overview

Generative video systems often produce visually compelling outputs that degrade over time through identity drift, motion artifacts, or prompt misalignment.

This work focuses on defining structured evaluation criteria that make model behavior measurable and improvable across runs.

Evaluation Framework

The framework separates generative video quality into independently measurable dimensions, allowing targeted improvements without overfitting to a single aesthetic outcome.

Core Evaluation Dimensions

Identity Consistency

• facial structure continuity

• hair style persistence

• wardrobe stability

• skin tone continuity

Motion Realism

• natural limb articulation

• consistent motion speed

• absence of temporal artifacts

Prompt Alignment

• accurate interpretation of actions

• emotional alignment with script intent

• camera movement fidelity

Visual Coherence

• lighting consistency

• texture stability

• environment continuity
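
One way to keep these dimensions independently measurable is to score each criterion separately and aggregate only within its own dimension. The sketch below is illustrative: the 0.0 to 1.0 scale, the `RUBRIC` dictionary, and the `score_dimensions` helper are assumptions made for this example, not part of the framework itself.

```python
from statistics import mean

# Dimensions and criteria mirror the list above.
# The key names and the 0.0-1.0 scoring scale are assumptions for illustration.
RUBRIC = {
    "identity_consistency": ["facial_structure", "hair_style", "wardrobe", "skin_tone"],
    "motion_realism": ["limb_articulation", "motion_speed", "temporal_artifacts"],
    "prompt_alignment": ["actions", "emotion", "camera_movement"],
    "visual_coherence": ["lighting", "texture", "environment"],
}


def score_dimensions(criterion_scores):
    """Average criterion scores within each dimension, keeping dimensions separate."""
    result = {}
    for dimension, criteria in RUBRIC.items():
        given = criterion_scores.get(dimension, {})
        missing = [c for c in criteria if c not in given]
        if missing:
            raise ValueError(f"{dimension} is missing scores for: {missing}")
        result[dimension] = mean(given[c] for c in criteria)
    return result
```

Reporting one number per dimension, rather than a single blended score, is what allows a weak dimension to be improved without hiding regressions in the others.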

Comparative Model Analysis

Structured comparisons reveal consistent strengths and weaknesses across models.
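
A structured comparison can be as simple as ranking models within each dimension. The following is a minimal sketch under stated assumptions: `compare_models` is a hypothetical helper, the model names are placeholders, and the scores are assumed to be per-dimension values on a shared scale.

```python
def compare_models(scores_by_model):
    """Return, for each dimension, (model, score) pairs ranked from best to worst."""
    dimensions = sorted({d for scores in scores_by_model.values() for d in scores})
    ranking = {}
    for dimension in dimensions:
        pairs = [
            (model, scores[dimension])
            for model, scores in scores_by_model.items()
            if dimension in scores  # skip models not evaluated on this dimension
        ]
        ranking[dimension] = sorted(pairs, key=lambda pair: pair[1], reverse=True)
    return ranking


# Placeholder model names and scores, for illustration only.
ranking = compare_models({
    "model_a": {"identity_consistency": 0.9, "motion_realism": 0.6},
    "model_b": {"identity_consistency": 0.7, "motion_realism": 0.8},
})
```

Ranking per dimension keeps a model's strength in one area from masking a weakness in another.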

Evaluation Methodology Examples

Selected artifacts demonstrating structured evaluation of generative video systems across identity stability, motion coherence, prompt alignment, and audiovisual synchronization.

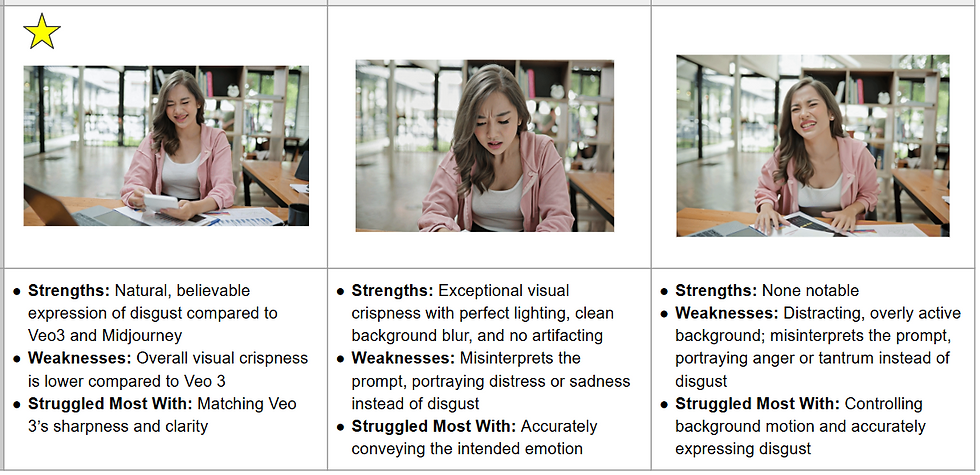

structured comparison of model emotion performance

structured comparison of model motion performance

Failure Mode Identification

Common failure modes include:

• identity drift across frames

• wardrobe or hairstyle instability

• inconsistent lighting adaptation

• emotional discontinuity

• motion pacing artifacts

Example:

A character's appearance changes unexpectedly when the prompt structure underspecifies identity anchors.

Insight:

Identity stability improves when prompts include persistent character attributes across shots.
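
To illustrate persistent character attributes, the sketch below repeats one identity description at the start of every shot prompt. The `CharacterAnchor` fields, the `build_shot_prompts` helper, and the prompt wording are all hypothetical; real prompt formats vary by model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CharacterAnchor:
    """Hypothetical set of attributes that should stay fixed across shots."""
    name: str
    face: str
    hair: str
    wardrobe: str
    skin_tone: str

    def describe(self):
        return (
            f"{self.name}: {self.face}, {self.hair}, "
            f"wearing {self.wardrobe}, {self.skin_tone} skin tone"
        )


def build_shot_prompts(anchor, shot_actions):
    """Prefix every shot prompt with the same identity description."""
    identity = anchor.describe()
    return [
        f"{identity}. Shot {index}: {action}"
        for index, action in enumerate(shot_actions, start=1)
    ]


# Example character and actions are invented for illustration.
anchor = CharacterAnchor("Mara", "oval face", "short black hair", "a red jacket", "warm brown")
prompts = build_shot_prompts(anchor, ["walks into the kitchen", "pours a cup of coffee"])
```

Because the anchor is frozen and reused verbatim, no shot can silently omit or reword an identity attribute.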

Style-Dependent Identity Behavior

Certain visual styles inherently introduce identity preservation challenges.

Highly stylized rendering pipelines may reinterpret facial structure, texture continuity, or material consistency.

Evaluation criteria should adapt tolerance thresholds depending on visual abstraction level.

Example:

Claymation style introduces texture reinterpretation that may not indicate prompt failure.

Insight:

Evaluation systems must distinguish stylistic transformation from identity loss.
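
Style-dependent tolerance could be expressed as a similarity threshold that loosens as the visual abstraction level rises. This is a sketch only: it assumes each frame has already been reduced to an identity embedding by some upstream model, and the `STYLE_THRESHOLDS` values are placeholders that would need tuning, not measured numbers.

```python
import math

# Hypothetical minimum similarity per style; placeholder values, not measured.
STYLE_THRESHOLDS = {"photoreal": 0.85, "stylized": 0.70, "claymation": 0.55}


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / norm


def flag_identity_drift(reference, frame_embeddings, style):
    """Return indices of frames whose similarity to the reference is below the style threshold."""
    threshold = STYLE_THRESHOLDS[style]
    return [
        index
        for index, embedding in enumerate(frame_embeddings)
        if cosine_similarity(reference, embedding) < threshold
    ]
```

With this shape, the same frame can pass under a claymation threshold and fail under a photoreal one, which is the distinction between stylistic transformation and identity loss.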

Application to Generative Video Systems

These evaluation frameworks support iterative improvement loops in AI-generated media pipelines.

Outputs from evaluation layers inform:

• prompt structure adjustments

• persona constraint refinement

• character definition clarity

• timing optimization

This enables more reliable generation of dialogue-driven character videos.
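
The improvement loop can be sketched as a lookup from low-scoring dimensions to the kinds of adjustment listed above. The pairing of dimensions to adjustments in `ADJUSTMENTS`, the dimension key names, and the 0.7 cutoff are assumptions chosen for illustration, not rules stated by the framework.

```python
# Hypothetical mapping from evaluation dimension to candidate adjustments.
ADJUSTMENTS = {
    "identity_consistency": ["character definition clarity"],
    "prompt_alignment": ["prompt structure adjustments", "persona constraint refinement"],
    "motion_realism": ["timing optimization"],
    "visual_coherence": ["prompt structure adjustments"],
}


def suggest_adjustments(dimension_scores, threshold=0.7):
    """List adjustments for dimensions scoring below the threshold, worst dimension first."""
    suggestions = []
    worst_first = sorted(dimension_scores.items(), key=lambda item: item[1])
    for dimension, score in worst_first:
        if score >= threshold:
            continue
        for action in ADJUSTMENTS.get(dimension, []):
            if action not in suggestions:  # avoid repeating a shared adjustment
                suggestions.append(action)
    return suggestions
```

Ordering by the weakest dimension first gives each iteration a single clear priority.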

Relationship to Persona Architecture

Within a persona architecture, evaluation outputs feed back into persona constraint refinement and character definition clarity, as part of the same iterative improvement loop.

Example Use Cases

These evaluation frameworks apply to:

• AI-generated dialogue videos

• character-driven recommendation content

• synthetic spokesperson systems

• multimodal conversational interfaces

Visual Consistency Controls for Multimodal Training Data

Before/after comparisons:

• White Balance Normalization

• Skin Tone Fidelity

• Exposure Normalization

• Cinematic Color Grading

• Style Consistency Across Images

Example transformations used to expand dataset diversity while preserving identity consistency and lighting coherence:

• illumination normalization

• tonal curve alignment

• color cast correction

• contrast harmonization
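
As one concrete instance of color cast correction, the sketch below applies the gray-world method, which scales each RGB channel so that its mean matches the overall mean. Gray-world is chosen here as a common, simple technique; the source does not say which method was actually used. The image is assumed to be a flat list of 8-bit `(r, g, b)` tuples.

```python
def gray_world_balance(pixels):
    """Scale each RGB channel so its mean matches the overall mean (gray-world assumption)."""
    if not pixels:
        raise ValueError("pixels must not be empty")
    count = len(pixels)
    channel_means = [sum(p[c] for p in pixels) / count for c in range(3)]
    if min(channel_means) == 0:
        raise ValueError("cannot balance an image with an all-zero channel")
    target = sum(channel_means) / 3
    gains = [target / m for m in channel_means]
    # Round to integers and clip to the 8-bit range.
    return [
        tuple(min(255, round(p[c] * gains[c])) for c in range(3))
        for p in pixels
    ]


# Two reddish pixels become neutral gray after balancing.
balanced = gray_world_balance([(200, 100, 100), (100, 50, 50)])
```

Gray-world can overcorrect scenes that are legitimately dominated by one color, so in practice a check on skin tone fidelity after correction would be a sensible safeguard.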